Disclaimer: I lost count of how many AI-generated responses I requested while researching this blog post (compared to last week’s grand total of two). I can attest that the ease with which I can check whether a certain piece of information even exists is incredibly seductive. And I don’t like it.

Many people have asked me over the years how much time I spend writing my blog. I’ve never actually calculated any totals, but I generally set aside a day every week to do it. For the record, I never would have kept it up for this long if I didn’t genuinely enjoy research and writing. I like to think that readers enjoy it and take some value from it, but (in a very Randian style of selfishness [1]) I really do it for me. Spending this time regularly diving into complex topics lets me explore various perspectives and possible outcomes, all while figuring out what I think and feel about it. At its core, writing helps me process thoughts and emotions, which, in turn, influences how I approach the world.

Image credit: [2]

One part of the problem with exploring new concepts is the limitations of search engines. While increasingly vast quantities of human knowledge are digitized, the internet does not have everything, nor is it always possible to find what you need. As I described in last week’s post, every search engine has limitations on what results it can find and/or the objectivity of those results. The other part of the problem is the limitation of time. I have often lamented that there is not enough time in one human life to learn everything that is out there to be learned. Even as an adult I’m still grappling with the concept that I cannot do it all and that I have to choose where to spend my time and attention.

Delegation or Dependence?

I recognize that Artificial Intelligence can be extremely valuable in summarizing and even analyzing huge amounts of information in short amounts of time, but I also simply don’t trust it yet to do a good job. Many of the topics I research – both for my job and for fun – are complex, nuanced, fraught, and rapidly evolving. One of my biggest concerns about using AI to assist with research is that the AI may not yet have enough familiarity with sensitive and emerging subject matter to contextualize and summarize certain research accurately. That rationale is part of why we instituted an AI policy at my job: we are incredibly cautious about how we use it for research (noting when we do) and absolutely do not use it for content creation. (A friend of mine who works at a large software company you’ve definitely heard of was thrilled when I told her we had such a policy.)

To put it simply, I just don’t trust it to do as good a job as a human brain. Whenever I’ve read AI summaries of my own writing, I’ve found that they capture the text fairly accurately but show no trace whatsoever of the subtext. I recognize that not every AI is created equal and that there are some very sophisticated models available that I haven’t used yet. [3] But in my experience, AI gets what something says but not what it means, which leads me to my bigger concern about using it: not so much that I’ll miss context in a summary, but that I’ll stop looking for it. Research requires discernment and critical thought. I worry that I will simply base my conclusions on an AI’s conclusions, and that I will eventually grow so used to doing so that I lose the capacity to question what I’m being told.

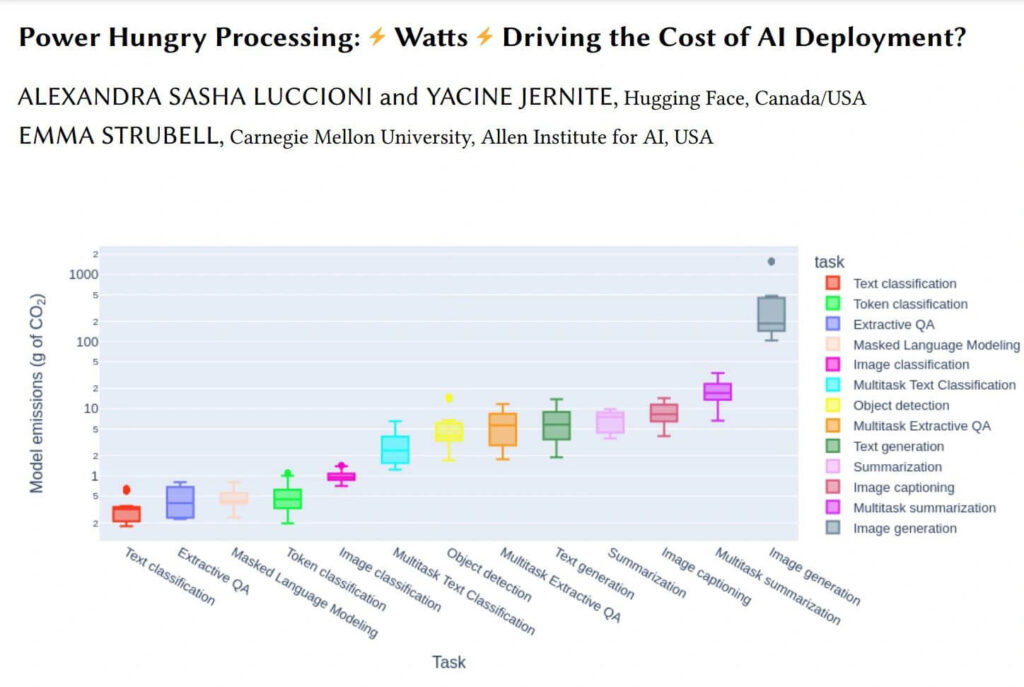

Image credit: [4]

I recognize that my brain is concocting a worst-case scenario, but it’s not an unrealistic one. There are plenty of people who believe whatever they read on the internet; who can’t tell a deepfake from reality, even if they’re looking for it (I scored 50% on this quiz – can you do better? [5]); who use search engines that are less likely to provide results that challenge their opinions (and don’t know or don’t care). “Discernment is still the realm of human thought,” said AI expert Oren Etzioni [6] when I heard him speak in January, but to be discerning, we need to really understand the state of the technology – both to use it effectively and to understand how it can be used to manipulate us. To that end, he encouraged the audience to interact with AI (specifically Large Language Models, such as ChatGPT) every day to get a better sense of what it can and cannot do.

Mental and Environmental Impacts

I fundamentally agree with him, even though I don’t want to. I don’t like the incessant push for optimization of our lives, the idea that “more” and “faster” equate to “better.” I firmly believe that in order for us to retain qualities that are uniquely human, we need to nurture them – ideally slowly, quietly, and away from screens. [7] That said, I do recognize that it probably behooves us to understand what technology is available to us and how it works so we can more effectively choose when to use it (and when not to). And I would be more willing to do that if not for some of the looming negative impacts on the world at large that we can expect to see in the wake of the AI buildout.

AI use and related energy consumption are on the verge of skyrocketing. Deloitte projects that our estimated worldwide data center energy consumption in 2025 (over 500 terawatt-hours) will nearly double by 2030 (to over 1,000 terawatt-hours); that increase in demand will largely be driven by generative AI models. [8] As I mentioned in last week’s post, the difference between the energy it takes to find information and the energy it takes to analyze it is massive. [9] A single ChatGPT query is believed to require almost 3 watt-hours of energy, about 10 times that of a Google query at 0.3 watt-hours. [10]
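
For anyone who wants to check the arithmetic behind those figures, here is a rough sketch in Python. It uses only the estimates quoted above, rounded to 3 watt-hours and to 500 and 1,000 terawatt-hours. The function names and the "ten queries a day" scenario are my own illustrative assumptions, not numbers from any of the cited sources.

```python
# Back-of-the-envelope arithmetic using the estimates quoted in this post.
# The constants are rounded from the text; the helper names and the
# "ten queries a day" scenario are hypothetical, for illustration only.

CHATGPT_WH_PER_QUERY = 3.0      # "almost 3 watt-hours" per query
GOOGLE_WH_PER_QUERY = 0.3       # watt-hours per search query
DATA_CENTER_TWH_2025 = 500.0    # "over 500 terawatt-hours"
DATA_CENTER_TWH_2030 = 1000.0   # "over 1,000 terawatt-hours"


def query_energy_ratio(ai_wh: float, search_wh: float) -> float:
    """How many times more energy one AI query uses than one search query."""
    return ai_wh / search_wh


def implied_annual_growth(start: float, end: float, years: int) -> float:
    """Compound annual growth rate that takes `start` to `end` in `years`."""
    return (end / start) ** (1 / years) - 1


def yearly_kwh(queries_per_day: float, wh_per_query: float) -> float:
    """Kilowatt-hours per year for a steady number of daily queries."""
    return queries_per_day * wh_per_query * 365 / 1000


if __name__ == "__main__":
    ratio = query_energy_ratio(CHATGPT_WH_PER_QUERY, GOOGLE_WH_PER_QUERY)
    growth = implied_annual_growth(DATA_CENTER_TWH_2025, DATA_CENTER_TWH_2030, 5)
    print(f"AI query vs. search query: about {ratio:.0f}x the energy")
    print(f"Doubling in five years implies about {growth:.1%} growth per year")
    # Hypothetical habit: ten AI queries a day, every day, for a year.
    print(f"Ten AI queries a day: about {yearly_kwh(10, CHATGPT_WH_PER_QUERY):.2f} kWh per year")
```

Under these rounded inputs, a doubling over five years works out to a bit under 15% growth per year. The per-query numbers are themselves contested estimates, so the output is only as good as those inputs.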

Image credit: [11]

This increased demand for energy to power AI will require more generation capacity, either on the grid or at the site of proposed data centers. The grid where I am located is still powered by approximately 60% fossil fuels, [12] and I can tell you that our part of Pennsylvania is planning to meet this anticipated growth in demand not with renewable energy, but with fracked gas. [13] In fact, the largest gas-powered data center in the country has been proposed for our region, boasting a capacity of 4.5 gigawatts (about enough to power Manhattan). [14] The scale of these facilities – and the decision to power them with fossil fuels – is mind-boggling, and it leaves me with questions I have not yet been able to answer through my own research: How much of this demand comes from individuals using ChatGPT to do research on the internet, and how much of it comes from corporate and government entities? And why do we need this much data processing power?

~

I hope to learn more (with or without the assistance of AI-based research) as this new subject continues to evolve. I recognize that my own energy consumption (and subsequent guilt) in trying out generative AI for these last two blog posts represents a drop in the bucket compared to the rest of my own carbon footprint – and a drop in the ocean compared to the grand scale of how we as a global society use AI. Nevertheless, that doesn’t stop me from asking myself – and you, dear reader – “How do we identify and focus on using what is truly necessary?”

But for now, I’d be curious to hear your thoughts on this subject. Do you use generative AI, and for what? Have you tried to limit your use or understand its impacts? I’m sure we’ll have plenty more to chat about in the future.

Thanks for reading!

[1] https://www.goodreads.com/book/show/859440

[3] https://www.youtube.com/watch?v=osven54J4so

[4] https://arxiv.org/abs/2311.16863

[5] https://www.nytimes.com/interactive/2025/06/29/business/ai-video-deepfake-google-veo-3-quiz.html

[6] https://en.wikipedia.org/wiki/Oren_Etzioni

[7] https://radicalmoderate.online/you-had-one-job-monotasking/

[9] https://radicalmoderate.online/in-search-of-a-better-search-engine

[10] https://www.sustainabilitybynumbers.com/p/carbon-footprint-chatgpt

[11] https://delawarecurrents.org/2025/07/28/pennsylvania-natural-gas/

[12] https://www.pjm.com/

[13] https://www.axios.com/local/pittsburgh/2025/04/16/ai-natural-gas-data-centers-pennsylvania

[14] https://www.axios.com/local/pittsburgh/2025/04/16/ai-natural-gas-data-centers-pennsylvania
