As a self-proclaimed Luddite, I am happiest when I am nowhere near an electronic device (even though my most gratifying hobby involves hours of research and writing on a laptop every week…). While I am far from being an expert in Artificial Intelligence (AI), I’ve spent some time researching it and cultivating what I hope are decently informed opinions. In fact, I believe it’s a very good thing to spend some time learning about things that scare us – and AI was certainly scary to me this time last year.

I often like to point out the difference between things that are “dangerous” and things that are “scary.” The distinction was first brought to my attention in a documentary about nuclear energy that described nuclear as scary, while fossil fuels are dangerous; airplane travel is scary, while car travel is dangerous. [1] Some horrifying scenarios that are highly unlikely play with our imaginations, and our brains give more weight to the high stakes associated with a bad outcome than to the low probability of it actually happening. Meanwhile, we normalize legitimate dangers that we encounter on a daily basis and thereby downplay the relatively higher probability that they will come to pass.

Image credit: [2]

After doing my own research, listening to experts, and simply watching what is going on around me in the world, I have revised my previous opinion that AI is scary. As I concluded in my blog series from last winter, [3] Skynet and the subjugation of humans by AI overlords make for a scary concept, but not one I think will become reality any time soon. In contrast, what is truly dangerous is how much of our autonomy, creativity, and critical thinking we as humans regularly cede to AI systems. AI is not battling us for dominance; instead, many of us seem willing to give it control over our lives.

Level Setting

Let me step back from that extremely opinionated statement and acknowledge that I make use of AI on a daily basis: online research, spell check, Google Maps, Duolingo. These are tools that I use to get through most days with ease and hopefully a little self-improvement. That said, I try to limit my use of AI to situations where I can clearly point to its function as a tool that assists me with something, not one that does it for me. I love reading and writing – and I don’t want an AI to read or write for me. I love art – and I’d rather pay my artist friends to make something for me than have AI do it for free. I love music – and I want to bang my head against the wall after a few minutes of AI-generated cafe jazz on YouTube.

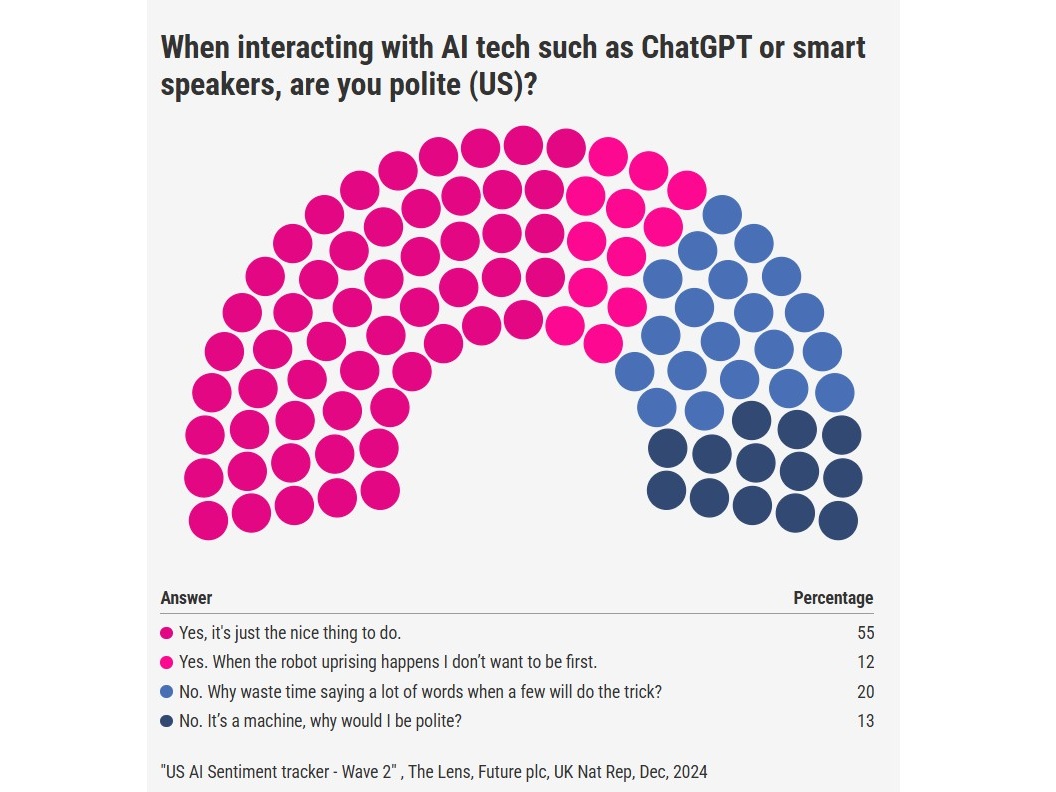

Recognizing that AI has very specific functions, including poring over the entire sum of digitized human knowledge whenever we are curious about something, can help us better define our relationship with it. Any tool can be used effectively … or not; it can be improved upon so it works better … or not. And how we’re using it – and interacting with it – has been the focus of some news stories this year. In April, Sam Altman of OpenAI was quoted as saying that it costs tens of millions of dollars more when people are polite to AI chatbots, i.e., saying “please” and “thank you,” because of all the additional processing time to evaluate those words across ever-growing numbers of requests. Altman also said that it was money well spent because… “you never know.” [4]

Image credit: [5]

There is some debate around the likelihood of an AI uprising (and whether the machines will spare those who were polite to them before their rise to power), but Sam Altman, Bill Gates, and others who know their way around a computer have said that mitigating the risk of human extinction from AI should be a global priority. Computer scientist Oren Etzioni, founding CEO of the Allen Institute for Artificial Intelligence, [6] didn’t seem to think the stakes were that high when I saw him speak in January of this year. He explained that many of the biggest concerns we should have with AI are with the people using it, not with the AI itself, and that the biggest blind spots we have are on both ends of the opinion spectrum: people who are too deferential to AI and people who think they know better than AI. (I guess we know where I fall in that picture.)

Sharpening the Saw

There are some more logical arguments for why it makes sense to be polite to AI, not the least of which is the maxim “garbage in, garbage out.” Unfortunately, when AI chatbots are trained on broad internet content, they see fact-checked data, emotionally charged opinions, and outright bigotry, and they don’t necessarily know the difference or know “good” from “bad” – and, as kids tend to do while they’re still learning, they may just repeat it. [7] It’s worth considering the fact that the internet can be a pretty dark place these days. Training AI on our worst moments and using it to inform and crank out content faster than humans are capable of, which then impacts what humans and machines are seeing online, sounds like a recipe for a vicious circle.

Image credit: [8]

So if we are going to recognize and use AI as a tool, there are things we can do to make it a better, more effective tool. Asking better questions of it in the first place can lead to better responses. As I like to say about the scientific process, it only answers the questions we ask, so we need to make sure we’re asking the right questions. And the same can be said of AI. Also, as far as responses go, it appears that language models are more likely to be polite back when they identify polite language in a prompt; they also tend to mirror the clarity and detail of the prompts they receive. [9] In short, positive interactions with AI can lead to a virtuous (upward) circle.

There’s also a practical and ethical argument that being polite to everyone and everything just keeps us in the habit of being decent people. I have certainly been less polite to AI customer service bots over the years, and I don’t think that has spilled over to my interactions with real humans, but who’s to say? That said, it’s worth coming back to the costs: while I am not opposed to the idea of just being nice, the lingering concern I have is not the economic cost of Sam Altman’s tens of millions of “well spent” dollars, but the environmental cost of all the extra energy we’ve spent in potentially superfluous interactions with AI over the years. Again, I’m not saying it’s wrong, but I am saying it’s worth asking some probing questions. And we’ll pick up with where – and how – we ask some of our questions next week.

~

I’ll leave it there for now, but I’d love to hear what you think. Did I get something wrong, or do you disagree? Do you have opinions or facts to share?

Please leave your thoughts below!

And thank you for reading!

[1] https://www.nuclearnowfilm.com/

[3] https://radicalmoderate.online/man-vs-machine-ai-friend-or-foe/

[4] https://www.nytimes.com/2025/04/24/technology/chatgpt-alexa-please-thank-you.html

[5] https://www.reddit.com/r/meme/comments/13rfylt/representative/

[6] https://en.wikipedia.org/wiki/Oren_Etzioni

[7] https://www.cnn.com/2025/07/08/tech/grok-ai-antisemitism

[8] https://www.diplomacy.edu/blog/politeness-in-2025-why-are-we-so-kind-to-ai/

[9] https://futurism.com/altman-please-thanks-chatgpt

4 Comments

Rebecca Steele · September 7, 2025 at 9:21 pm

So is the bottom line —

AI SUCKS

Or

no one wants to be themselves or be real real?

There is a black hole (darkness ).

This is an awesome piece. I totally sank into this deep passion on this topic.

I can’t give enough recognition.

I do not like AI

The World needs to be very scared.

Alison · September 10, 2025 at 8:53 pm

I think the bottom line is – at least for now – AI is a tool. I am more afraid of the people who might use AI to manipulate others than I am of AI itself.

That said, if AI is on track to surpass our intelligence, it is learning from us… and we need to make sure it learns from our best qualities, not our worst.

palpediablog · September 8, 2025 at 11:59 am

Interesting column on AI by Thomas Friedman in the NYT last week: https://www.nytimes.com/2025/09/03/opinion/us-china-ai-trust.html?unlocked_article_code=1.kU8.J-zu.UOsa70XudAC6&smid=url-share

Alison · September 10, 2025 at 8:51 pm

Well, that piece is fascinating and terrifying. It really gets back to what we were talking about the other day – the US and Russia had a narrow window to cooperate on nuclear technology and avoid the Cold War… but with the rate AI is progressing, the window is probably narrower now with China, and now the technology could potentially make its own decisions someday.